Exercise - Add Verses

Now that you’ve learned how to add stanzas and verses to your configuration, let’s try to add new verses on your own.

Exercise 1 - Add new verse - SegmentSizeDrift

In this exercise, let's define a SegmentSizeDrift verse. This verse configures Mona to find segments whose size differs significantly between a target dataset and a benchmark dataset.
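To make the idea concrete, here is a minimal Python sketch of what comparing segment sizes between a target and a benchmark dataset could look like. It is not Mona's implementation: the function name `segment_size_changes`, the sample records, and the choice of measuring a segment's size as its share of all records are assumptions made for illustration only.

```python
from collections import Counter

def segment_size_changes(target, benchmark, field):
    """Return {segment_value: change in share of records} for one field.

    Hypothetical helper: a segment's "size" is taken here as its share
    of all records in the dataset, which is an assumption of this sketch.
    """
    target_counts = Counter(r[field] for r in target)
    benchmark_counts = Counter(r[field] for r in benchmark)
    changes = {}
    for value in set(target_counts) | set(benchmark_counts):
        target_share = target_counts[value] / len(target)
        benchmark_share = benchmark_counts[value] / len(benchmark)
        changes[value] = target_share - benchmark_share
    return changes

# Made-up records, for illustration only.
benchmark = [{"city": "A"}] * 6 + [{"city": "B"}] * 4
target = [{"city": "A"}] * 2 + [{"city": "B"}] * 8

changes = segment_size_changes(target, benchmark, "city")
# Segments whose share of records went down in the target dataset.
shrinking = sorted(v for v, delta in changes.items() if delta < 0)
print(shrinking)
```

A real verse would also need a rule for deciding which changes count as significant; this sketch only reports the direction of the change.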

In our case, we want to check whether the number of records for a specific "city", "purpose", or bucket of "approved_amount" has decreased in the last 2 weeks compared to the previous 6 weeks.

You can add this verse under the same stanza, or create a new one - your choice.
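If you edit the JSON config file programmatically, both options can be sketched in a few lines of Python. The helper name `add_verse` and the stanza name "another_stanza" are hypothetical; only the "stanzas" / "verses" layout is taken from the config files shown on this page.

```python
import json

def add_verse(config, stanza_name, verse):
    """Append a verse to a stanza, creating the stanza if it is missing."""
    stanza = config.setdefault("stanzas", {}).setdefault(stanza_name, {})
    stanza.setdefault("verses", []).append(dict(verse))  # store a copy
    return config

config = {"stanzas": {"stanza_name": {"verses": []}}}
new_verse = {"type": "SegmentSizeDrift"}  # remaining verse params omitted here

add_verse(config, "stanza_name", new_verse)      # option 1: the same stanza
add_verse(config, "another_stanza", new_verse)   # option 2: a new stanza
print(json.dumps(config, indent=2))
```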

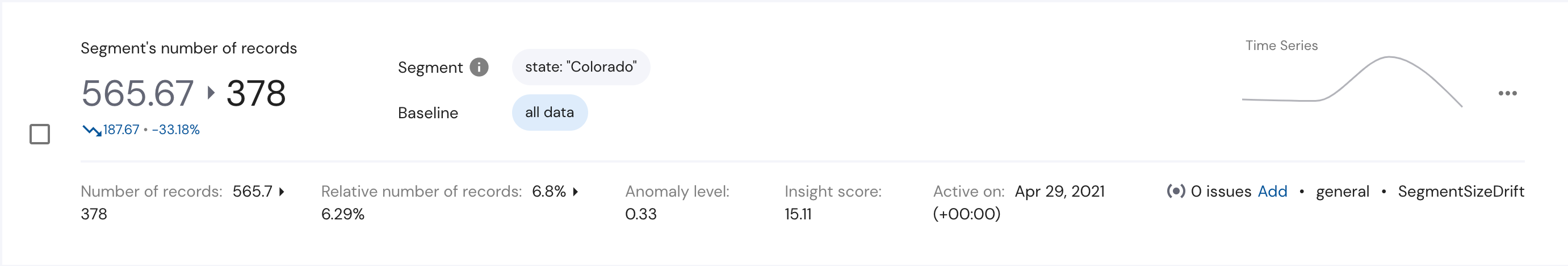

Insights for this verse should look like this:

See Solution

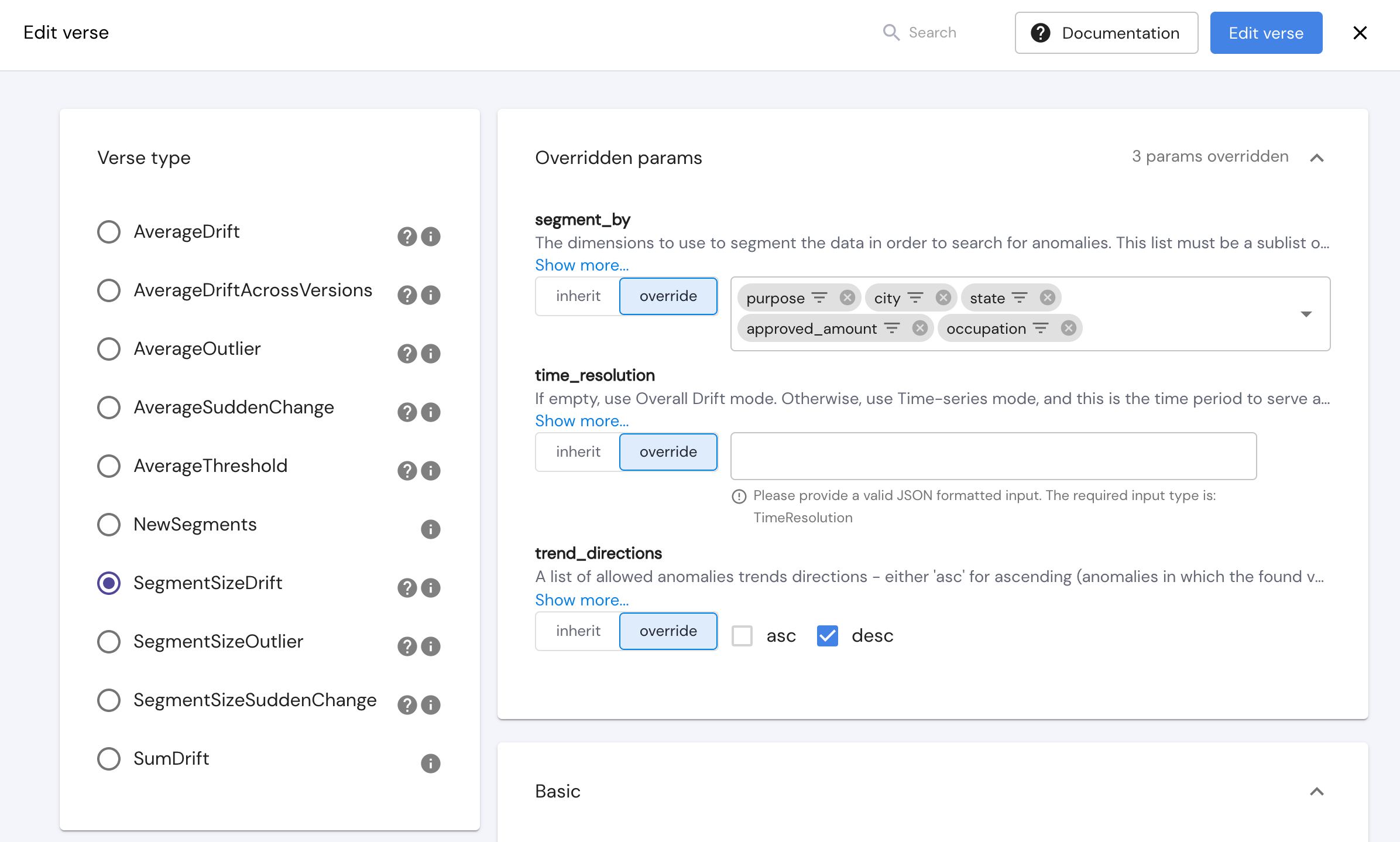

In the config GUI

OverAll Mode

In order to use OverAll Mode we set the time_resolution to be an empty string.

Read more about OverAll Mode here.

Or in the JSON config file

    {
      "stanzas": {
        "stanza_name": {
          "verses": [
            {
              "type": "SegmentSizeDrift",
              "segment_by": [
                "city",
                "purpose",
                "state",
                "occupation",
                "approved_amount"
              ],
              "baseline_segment": {
                "stage": [
                  {
                    "value": "inference"
                  }
                ]
              },
              "trend_directions": [
                "desc"
              ],
              "time_resolution": ""
            }
          ]
        }
      }
    }

Automatic insights

Once a verse is defined, Mona will automatically search for insights for this verse and will send you an email once the new insights are ready.

Exercise 2 - Add new verse - AverageDriftAcrossVersions

In this exercise, let's define an AverageDriftAcrossVersions verse. This verse configures Mona to find significant changes in behavior when system or model versions are updated. It finds segments in which a metric's average differs significantly between a target dataset and a benchmark dataset. Both datasets are defined by a version field, together with a choice of which of its possible values belong to each dataset.

In our case, we want to track the average of the following metrics:

"credit_score", "offered_amount", "approved_amount", "credit_label_delta" and "offered_approved_delta_normalized".

We want to track these metrics in different segments of our data, using several segmentations, such as: "occupation", "purpose", "stage", and "city".

We want to check whether these metrics behave differently in the latest model version compared to the previous versions, as defined by the "model_version" field.

You can add this verse under the same stanza, or create a new one - your choice.

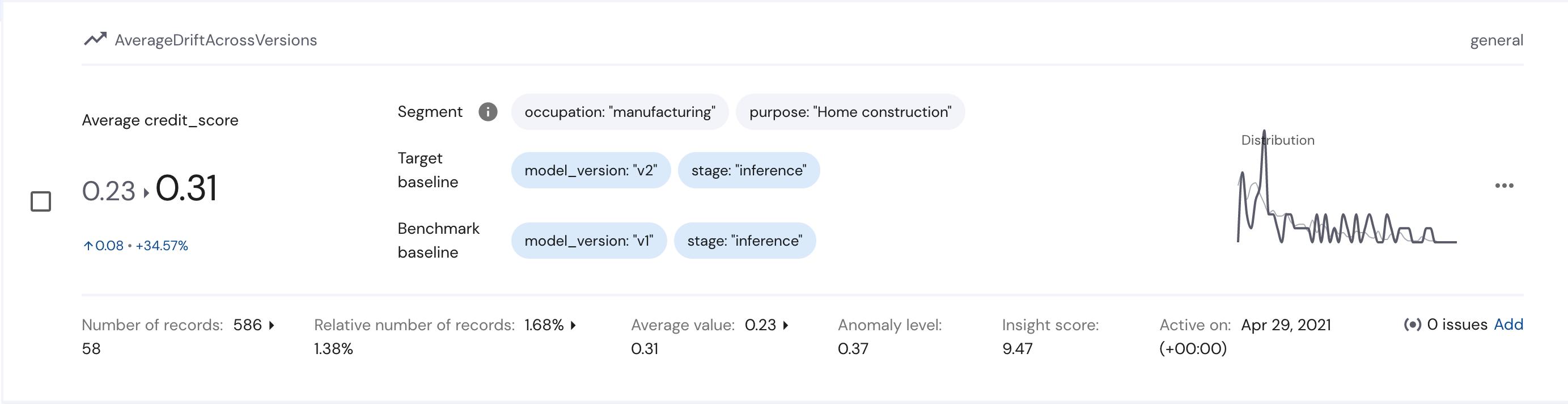

Insights for this verse should look like this:

See Solution

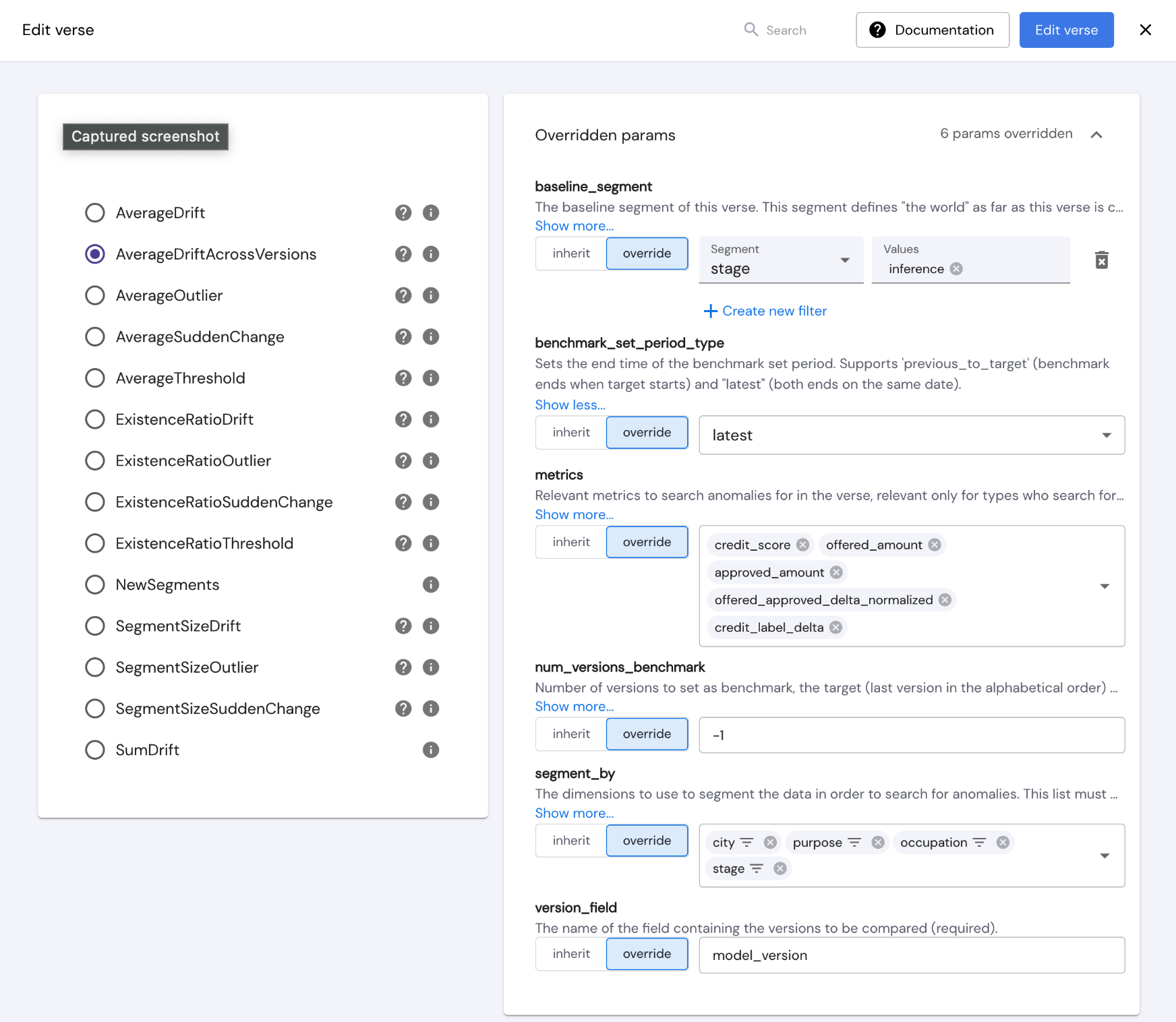

The AverageDriftAcrossVersions verse requires a few more params:

- "benchmark_set_period_type": Sets the end time of the benchmark set period. Since both model versions cover the same dates rather than following one another, we use "latest".

- "num_versions_benchmark": Number of versions to set as benchmark. -1 sets the benchmark to ALL previous versions.

- "version_field": The name of the field containing the versions to be compared (required). In this case we use "model_version".
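The effect of "num_versions_benchmark" can be illustrated with a short Python sketch. This is an illustration of the description above, not Mona's implementation: the function name `split_versions`, the version labels, and the assumption that versions are ordered oldest to newest with the newest one as the target are all hypothetical.

```python
def split_versions(versions, num_versions_benchmark):
    """Split ordered versions into (target, benchmark_versions).

    Assumes `versions` is ordered oldest to newest and that the newest
    version is the target; both are assumptions of this sketch.
    """
    target, previous = versions[-1], versions[:-1]
    if num_versions_benchmark == -1:
        return target, previous  # -1 means all previous versions
    # Otherwise keep only the most recent N previous versions.
    start = max(len(previous) - num_versions_benchmark, 0)
    return target, previous[start:]

versions = ["v1", "v2", "v3", "v4"]  # made-up values of "model_version"

all_previous = split_versions(versions, -1)
last_one = split_versions(versions, 1)
print(all_previous)
print(last_one)
```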

In the config GUI

Or in the JSON config file

    {
      "stanzas": {
        "stanza_name": {
          "verses": [
            {
              "type": "AverageDriftAcrossVersions",
              "metrics": [
                "credit_score",
                "offered_amount",
                "approved_amount",
                "credit_label_delta",
                "offered_approved_delta_normalized"
              ],
              "segment_by": [
                "city",
                "purpose",
                "stage",
                "occupation"
              ],
              "baseline_segment": {
                "stage": [
                  {
                    "value": "inference"
                  }
                ]
              },
              "benchmark_set_period_type": "latest",
              "num_versions_benchmark": -1,
              "version_field": "model_version"
            }
          ]
        }
      }
    }

Exercise 3 - Add new verse - Drift between train and inference

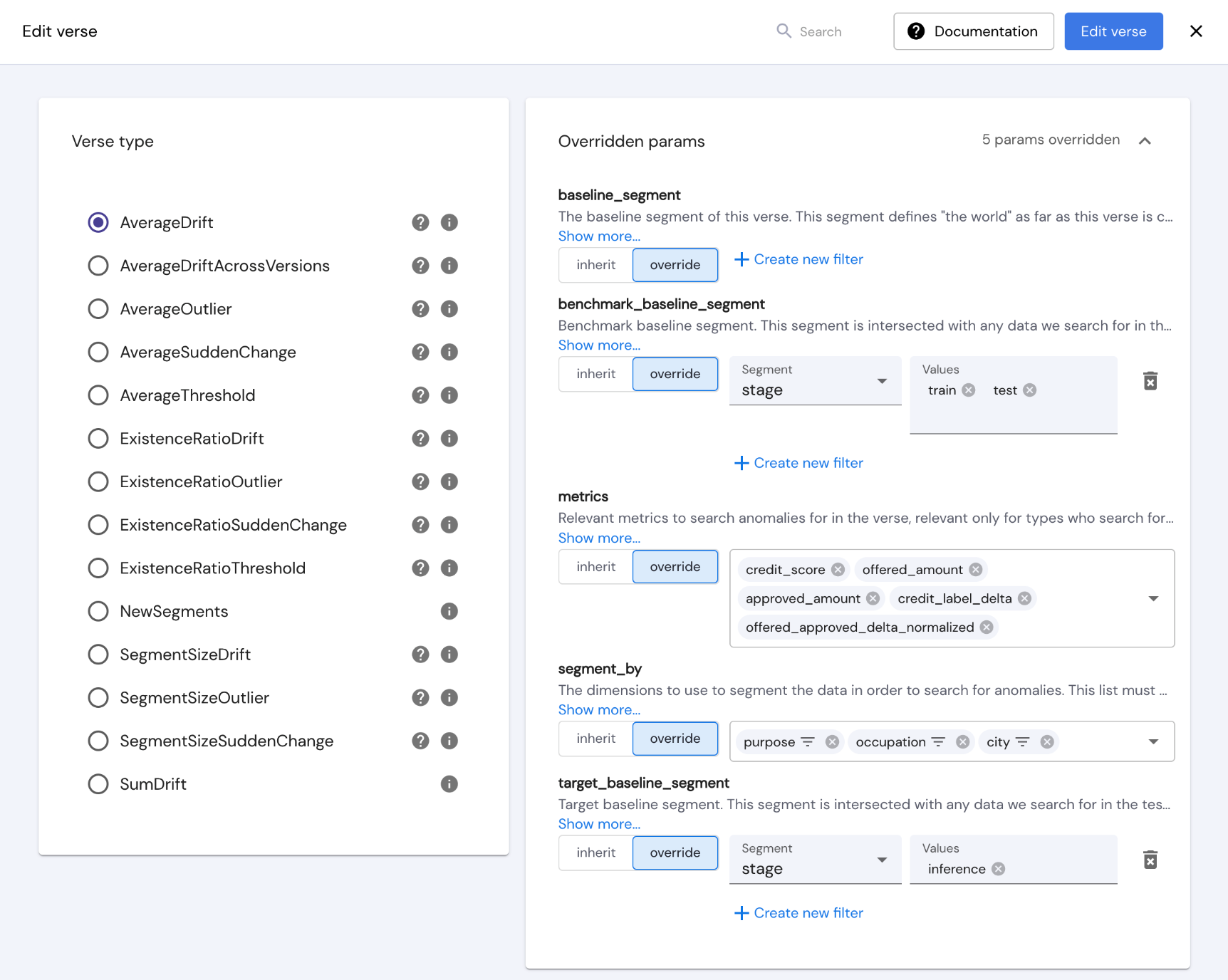

In this exercise, let's define an AverageDrift verse that checks for drift between train and inference data. To do so, we use the regular AverageDrift verse, which configures Mona to find segments in which a metric's average differs significantly between a target dataset and a benchmark dataset, and we set those to the inference and train data respectively.
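As a rough illustration of the comparison this verse describes, the following Python sketch computes, per segment, the difference between a metric's average in the target (inference) data and in the benchmark (train) data. It is not Mona's implementation: the function names, the sample records, and the "car" segment value are made up, and no significance test is applied.

```python
from collections import defaultdict
from statistics import mean

def segment_averages(records, segment_field, metric):
    """Return {segment_value: average of metric} for one segmentation field."""
    groups = defaultdict(list)
    for r in records:
        groups[r[segment_field]].append(r[metric])
    return {seg: mean(vals) for seg, vals in groups.items()}

def average_drift(target, benchmark, segment_field, metric):
    """Return {segment: target average - benchmark average} for shared segments."""
    t = segment_averages(target, segment_field, metric)
    b = segment_averages(benchmark, segment_field, metric)
    return {seg: t[seg] - b[seg] for seg in t.keys() & b.keys()}

# Made-up records, for illustration only.
records = [
    {"stage": "train", "purpose": "car", "credit_score": 700},
    {"stage": "train", "purpose": "car", "credit_score": 720},
    {"stage": "inference", "purpose": "car", "credit_score": 650},
    {"stage": "inference", "purpose": "car", "credit_score": 670},
]
benchmark = [r for r in records if r["stage"] == "train"]
target = [r for r in records if r["stage"] == "inference"]

drift = average_drift(target, benchmark, "purpose", "credit_score")
print(drift)
```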

In our case, we want to track the average of the following metrics:

"credit_score", "offered_amount", "approved_amount", "credit_label_delta" and "offered_approved_delta_normalized".

We want to track these metrics in different segments of our data, using several segmentations, such as: "occupation", "purpose" and "city".

You can add this verse under the same stanza, or create a new one - your choice.

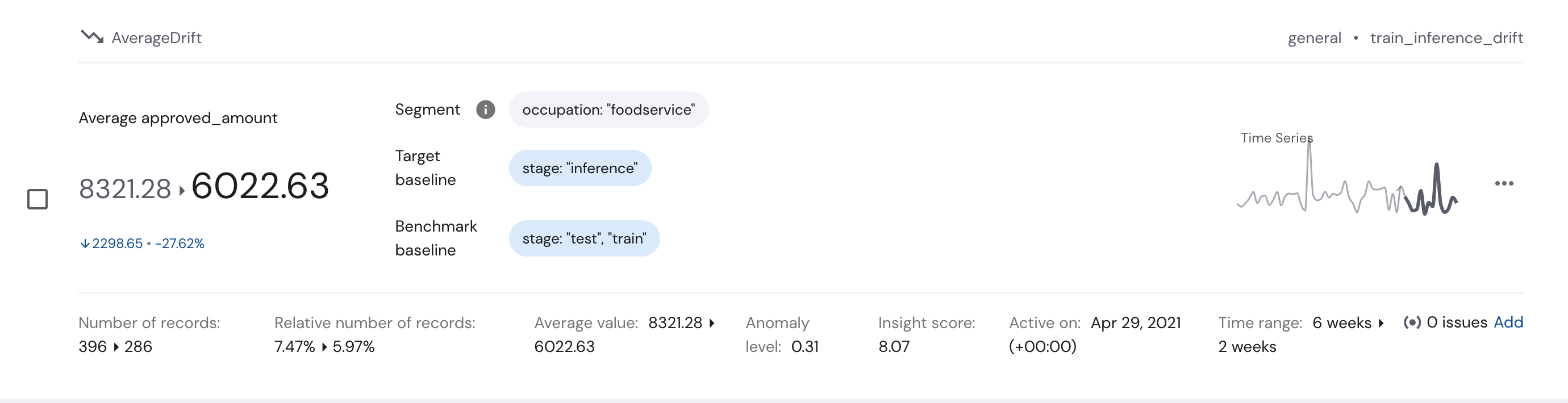

Insights for this verse should look like this:

See Solution

To set the target and benchmark data sets, we use the following params and set different values of the "stage" field:

- "benchmark_baseline_segment": as we have both "train" and "test" data, we will set both as the value.

- "target_baseline_segment": here we will set "inference" as the baseline segment.

Because the "baseline_segment" param is set to "inference" at the stanza level, this verse must override that value with an empty one ({} in the JSON config) so that no overall baseline applies.
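One way to picture this override is as a shallow merge in which verse-level values take precedence over stanza-level values. The Python sketch below models it that way; the merge rule and the function name `effective_params` are assumptions made for illustration, not documented Mona behavior.

```python
def effective_params(stanza_params, verse_params):
    """Verse-level values take precedence over stanza-level values."""
    return {**stanza_params, **verse_params}

# Stanza-level default, as used in the earlier exercises.
stanza_params = {"baseline_segment": {"stage": [{"value": "inference"}]}}

verse_params = {
    "type": "AverageDrift",
    "baseline_segment": {},  # empty value clears the stanza-level baseline
    "target_baseline_segment": {"stage": [{"value": "inference"}]},
    "benchmark_baseline_segment": {
        "stage": [{"value": "train"}, {"value": "test"}]
    },
}

merged = effective_params(stanza_params, verse_params)
print(merged["baseline_segment"])
```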

In the config GUI

Or in the JSON config file

    {
      "stanzas": {
        "stanza_name": {
          "verses": [
            {
              "type": "AverageDrift",
              "metrics": [
                "credit_score",
                "offered_amount",
                "approved_amount",
                "credit_label_delta",
                "offered_approved_delta_normalized"
              ],
              "segment_by": [
                "purpose",
                "occupation",
                "city"
              ],
              "benchmark_baseline_segment": {
                "stage": [
                  {
                    "value": "train"
                  },
                  {
                    "value": "test"
                  }
                ]
              },
              "target_baseline_segment": {
                "stage": [
                  {
                    "value": "inference"
                  }
                ]
              },
              "baseline_segment": {}
            }
          ]
        }
      }
    }

References: SegmentSizeDrift, AverageDrift, AverageDriftAcrossVersions
